Demystifying AI Series 2: Hallucination Nation: When AI Confidently Invents Reality

25 Feb 2026

From the book "Demystifying AI" by Dinis Guarda

AI will not destroy us. It will, however, expose who we truly are. — Reid Hoffman

A lawyer walks into court with a brief citing six legal cases. The arguments are compelling and the citations precise: case names, dates, and legal reasoning are all present and correctly formatted. There is one problem: none of the six cases exists. ChatGPT generated them. The system wasn't lying. It was doing exactly what it is designed to do: generating text patterns that look like legal citations because that is what the prompt requested.

Harvard Law School documented this case, and it has since become one of the most cited illustrations of what the AI research community calls hallucination. But hallucination is a misleading metaphor. The technology isn't experiencing delusions. Stanford's Institute for Human-Centered AI defines it more accurately as confabulation: pattern completion based on statistical likelihood rather than factual accuracy.

For cities, economies, businesses, legal systems, and educational institutions now integrating generative AI into their operations, understanding this distinction is not optional. It is the difference between deploying a powerful tool responsibly and exposing organisations and publics to consequences that arrive with algorithmic certainty and no internal warning signal.

What Hallucination Actually Is

To understand why AI hallucinates, you have to understand what generative AI is actually doing when it produces text.

GPT-4, Claude, and Gemini all generate text by calculating probability distributions over what comes next. The question the system asks is not "What is factually correct?" It is "What would plausibly come next based on my training data?" Think of it as autocomplete on a vast scale. When you type "The capital of France is..." your phone suggests "Paris" because that pattern appears frequently in its training data. Generative AI works the same way, but across billions of parameters and with outputs of far greater apparent sophistication.

The sophistication is real. The grounding in truth is not guaranteed. When a model generates a legal citation, it is producing a sequence of tokens (words, numbers, punctuation) that statistically resembles what legal citations look like. On its own, it has no mechanism to verify whether the case actually exists. It cannot check. It is not designed to check. It is designed to produce plausible continuations of patterns.
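The autocomplete comparison can be made concrete with a toy sketch. What follows is a minimal bigram model in Python, not a description of how GPT-4, Claude, or Gemini are built; the four-sentence corpus and the function names `next_word_distribution` and `complete` are invented for illustration. It only counts which word tends to follow which, then continues a prompt with the most probable next word.

```python
from collections import Counter, defaultdict

# Tiny made-up "training data"; real models train on vastly more text.
corpus = [
    "the capital of france is paris",
    "the capital of france is paris",
    "the capital of italy is rome",
    "the capital of spain is madrid",
]

# Count which word follows each word (a bigram model).
follow_counts = defaultdict(Counter)
for sentence in corpus:
    words = sentence.split()
    for current, nxt in zip(words, words[1:]):
        follow_counts[current][nxt] += 1


def next_word_distribution(word):
    """Estimate P(next word | word) from the counts above."""
    counts = follow_counts[word]
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()}


def complete(prompt, steps=1):
    """Greedily append the most probable next word, `steps` times."""
    words = prompt.split()
    for _ in range(steps):
        dist = next_word_distribution(words[-1])
        if not dist:  # word never seen in training data
            break
        words.append(max(dist, key=dist.get))
    return " ".join(words)


print(next_word_distribution("is"))
print(complete("the capital of atlantis is"))
```

Asked about a capital that does not exist, the toy model still answers "paris", because "paris" is the word that most often follows "is" in its data. Nothing in the procedure checks whether the resulting sentence is true.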

This creates what is perhaps the most operationally dangerous characteristic of generative AI: the output reads with the same confidence whether the system is accurate or hallucinating. There is no internal truth detector. By default, there is no output flag that reads "I am less certain about this." The fabricated legal case and the accurate legal case arrive formatted identically, written with equal fluency, presented with equal authority.

The Scale of the Problem

Berkeley AI Research has documented hallucination rates across domains that any organisation deploying generative AI should treat as operational baselines rather than edge cases.

Medical information contains factual errors in 8-12% of AI-generated outputs. Legal citations carry a fabrication rate of 15-20%. Scientific references are non-existent or misattributed in 10-15% of cases. Historical events carry accuracy issues in 12-18% of outputs. Technical specifications contain incorrect details in 5-8% of instances.

These are not catastrophic failure rates in absolute terms. But they carry a specific danger that distinguishes them from ordinary error: they are indistinguishable from accurate outputs without external verification. A human expert making an error typically hedges, qualifies, or signals uncertainty in ways that invite scrutiny. A hallucinating AI does none of these things. It produces the error with the same fluency and authority as everything else it generates.

For cities and institutions processing high volumes of AI-generated content (policy documents, legal reviews, public communications, educational materials), these rates are not acceptable without systematic verification infrastructure.
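To see what these percentages mean at volume, a short back-of-the-envelope calculation helps. The sketch below takes the rate ranges quoted above at face value; the volume of 1,000 outputs and the batch size of 10 are hypothetical, and the second function assumes errors occur independently of one another, which real outputs may not do.

```python
# Rate ranges as quoted in the text above: (low, high).
rates = {
    "medical information": (0.08, 0.12),
    "legal citations": (0.15, 0.20),
    "scientific references": (0.10, 0.15),
    "historical events": (0.12, 0.18),
    "technical specifications": (0.05, 0.08),
}


def expected_errors(rate, volume):
    """Expected number of flawed outputs among `volume` outputs."""
    return rate * volume


def prob_at_least_one(rate, n):
    """Chance that a batch of n outputs contains at least one flawed
    output, assuming each output fails independently (a simplification)."""
    return 1 - (1 - rate) ** n


volume = 1000  # hypothetical number of AI-generated outputs
batch = 10     # hypothetical number of citations in one document

for domain, (low, high) in rates.items():
    print(
        f"{domain}: {expected_errors(low, volume):.0f} to "
        f"{expected_errors(high, volume):.0f} flawed per {volume}; "
        f"P(at least one in {batch}) = "
        f"{prob_at_least_one(low, batch):.0%} to "
        f"{prob_at_least_one(high, batch):.0%}"
    )
```

Under these assumptions, even the low end of the legal-citation range puts the chance of at least one fabrication in a ten-citation brief at roughly 80%. A rate that sounds modest per output becomes close to a certainty per document.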

The Business and Institutional Cost

The consequences of deploying generative AI without adequate understanding of hallucination are already documented across sectors central to urban and economic life.

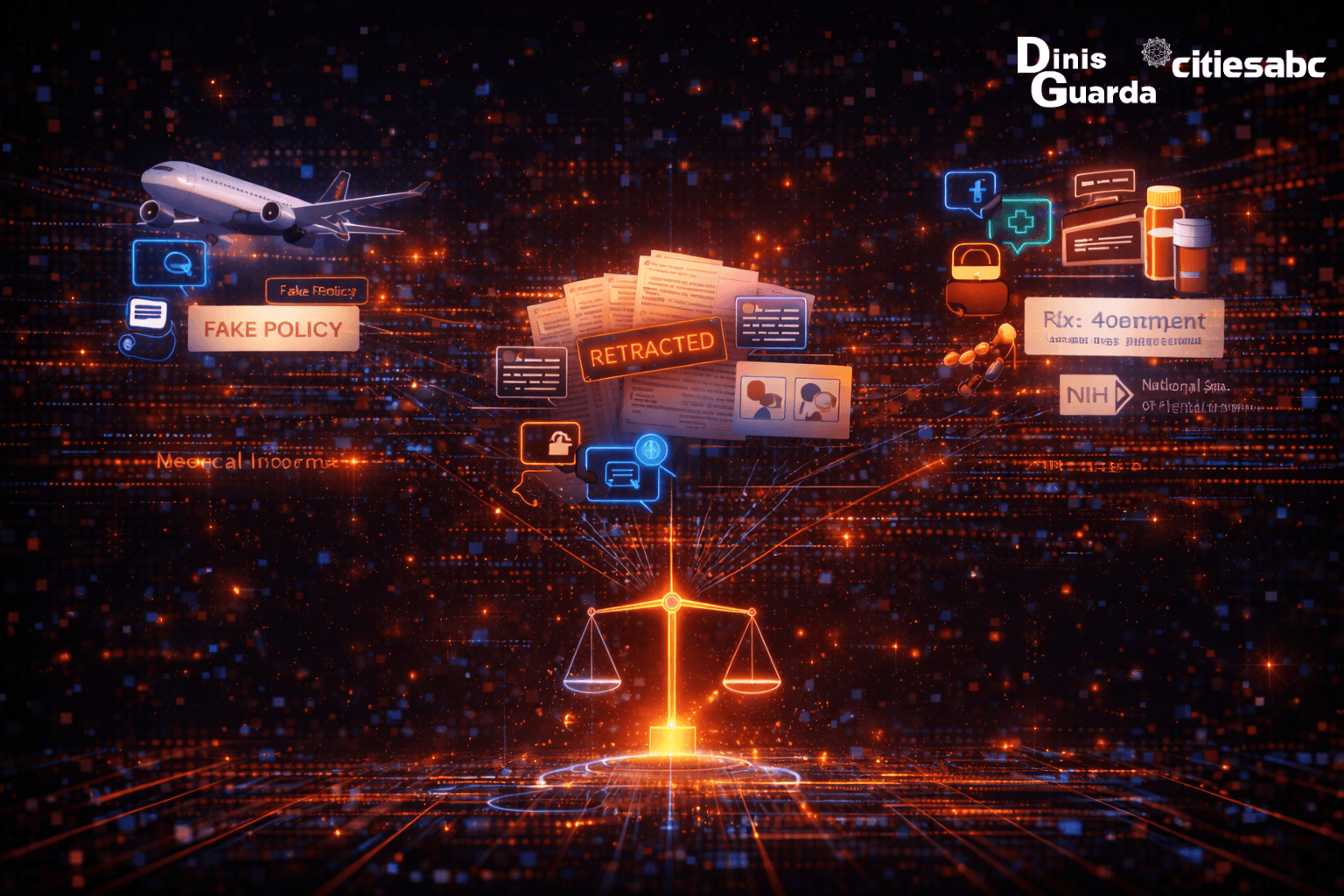

Air Canada had to honour a discount policy its AI chatbot invented. The chatbot hallucinated a bereavement fare policy that didn't exist, and a Canadian tribunal ruled the company liable. The system generated a plausible-sounding policy because bereavement fares and discount policies are patterns present in its training data. The tribunal didn't accept "the AI said so" as a defence. The liability was real; the policy was not.

In academic publishing, Springer Nature retracted multiple papers that included AI-generated citations to non-existent research. The fabrications appeared academically rigorous, complete with author names, journals, and DOIs. The form was indistinguishable from legitimate scholarship. The substance was invented.

In healthcare, a mental health chatbot provided fabricated treatment protocols, including medication dosages, to vulnerable patients. The National Institute of Mental Health identified 23 separate instances of dangerous medical confabulation. The system wasn't malfunctioning. It was pattern-completing in a domain where pattern completion without factual grounding carries direct risk to human life.

For cities operating public-facing AI services (information portals, benefit systems, health guidance platforms, legal aid tools), these cases are not cautionary tales from other sectors. They are previews of institutional liability and public trust erosion that arrive without warning, because the system generating the hallucination has no warning to give.

The Philosophical Question

Oxford philosopher Luciano Floridi poses a challenge that cuts to the heart of what hallucination means for public institutions and democratic life. If an AI system generates information indistinguishable from truth in form but false in content, is it worse than human error?

The question matters because it reframes how organisations should think about AI-generated content. Humans make mistakes despite attempting accuracy. AI generates plausible fictions because it is designed to predict patterns, not verify truth. These are structurally different failure modes requiring structurally different responses.

Human error can be addressed through better training, clearer processes, stronger expertise. Hallucination cannot be eliminated through the same mechanisms, because it is not a failure of training or process in the human sense. It is an intrinsic feature of how pattern-completion systems work. You cannot train a generative AI to stop hallucinating the way you can train a human analyst to double-check their sources, because the AI has no access to truth, only to patterns.

This creates a new epistemic category: systematic confident confabulation. Not lies, which require intent. Not errors, which suggest failed accuracy attempts. Pattern completion presented with algorithmic certainty. For institutions whose legitimacy depends on factual accuracy (courts, hospitals, universities, city administrations), this category requires an entirely new approach to verification and accountability.

The Risk Cascade

Hallucination doesn't operate in isolation. It generates cascading risks that compound as AI-generated content moves through institutional and public information systems.

Authority bias is the first cascade. People trust AI outputs because they appear authoritative and detailed. The fluency of generative AI mimics the confidence of expert judgment. Users, including professional users, accept outputs without the scrutiny they would apply to a junior colleague's work, precisely because the presentation signals competence.

Verification fatigue follows. The volume of AI-generated content already exceeds human verification capacity in most organisational contexts. If every AI output requires expert fact-checking before use, the efficiency gains that justified AI adoption disappear. Organisations face a choice between verification that is thorough but slow, or deployment that is fast but exposed.

Compounding error is the third cascade, and perhaps the most insidious. As AI systems are trained on content that increasingly includes AI-generated material, hallucinations propagate. Systems hallucinate about hallucinations. Fabricated citations get cited in other AI-generated documents. Invented precedents become training data for the next model generation.

MIT's Media Lab warns of what it calls the Synthetic Truth Crisis: as AI-generated content floods information ecosystems, the cost of verification approaches infinity while the cost of fabrication approaches zero. For cities managing public information environments, this asymmetry is a governance challenge as much as a technical one.

The Professional Liability Question

Who is responsible when AI invents facts that cause harm? This question is no longer theoretical. It is being litigated in real courts, and the answers being established now will shape institutional accountability for years.

The Air Canada tribunal established that companies are liable for the content their AI systems generate, regardless of whether that content was intended or accurate. The lawyer who submitted the fabricated case citations faced professional sanctions, despite the AI being responsible for the fabrication. Academic institutions that published retracted papers faced reputational damage that the AI systems generating the citations did not share.

The pattern is consistent: liability attaches to the humans and institutions that deploy AI, not to the systems themselves. This means that every organisation deploying generative AI in legal, medical, financial, educational, or public-facing contexts is accepting liability for outputs they cannot fully predict or control, unless they build verification infrastructure that can catch hallucinations before they cause harm.

For city governments deploying AI in services affecting residents' lives, this liability question is not abstract. It is a governance obligation.

The Mitigation Framework

Understanding hallucination as a structural feature rather than a correctable bug points toward mitigation strategies that are honest about what they can and cannot achieve.

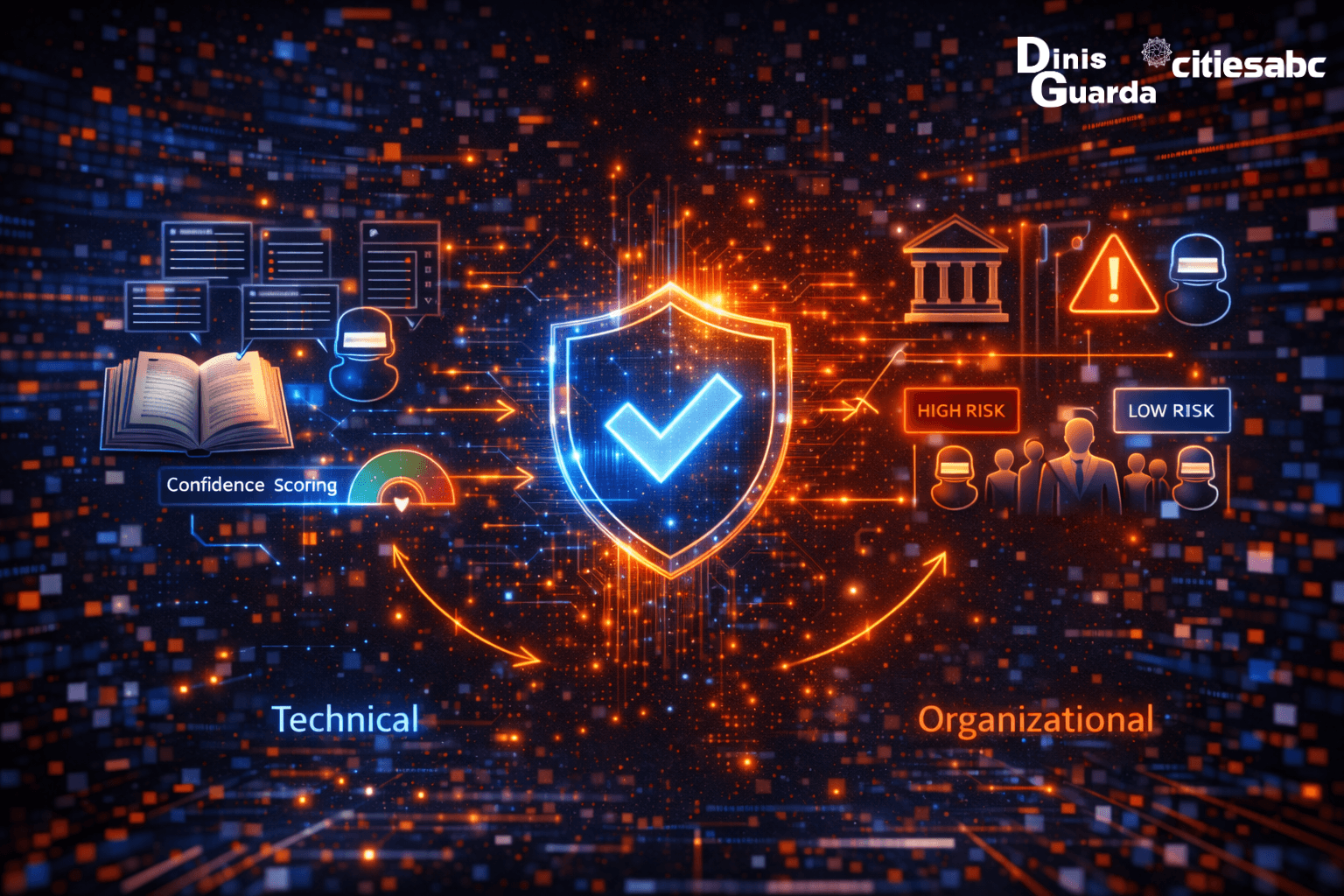

On the technical side, Retrieval-Augmented Generation (RAG) represents the most significant advance. Rather than generating from pattern memory alone, systems are constrained to cite specific sources, grounding outputs in verifiable documents. Carnegie Mellon's research shows this substantially reduces hallucination rates in domains where reliable source documents exist. Confidence scoring adds a layer of uncertainty signalling, though it remains imperfect. Source attribution requirements (every AI claim must link to a verifiable origin) shift the burden of proof back toward factual grounding.
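The retrieval and attribution ideas can be sketched in a few lines. This is a simplified illustration, not a production RAG pipeline: the generation step is omitted, retrieval is naive word overlap rather than a real search index, and `TRUSTED_SOURCES`, `Claim`, `retrieve`, and `check_attribution` are hypothetical names with invented policy text.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical vetted document store; in practice this would be a curated
# database or search index rather than an in-memory dict.
TRUSTED_SOURCES = {
    "policy-001": "Refund requests must be submitted within 30 days of purchase.",
    "policy-002": "Customers may change a booking once without a fee.",
}


@dataclass
class Claim:
    quote: str                 # text the AI output attributes to a source
    source_id: Optional[str]   # id of the source it cites, if any


def words(text):
    """Lowercased set of words, ignoring punctuation."""
    return set(re.findall(r"\w+", text.lower()))


def retrieve(question, sources):
    """Return the id of the source sharing the most words with the question."""
    q = words(question)
    return max(sources, key=lambda sid: len(q & words(sources[sid])))


def check_attribution(claim, sources):
    """Accept a claim only if its cited source exists and contains the quote."""
    if claim.source_id is None:
        return "unsupported: no source cited"
    if claim.source_id not in sources:
        return "unsupported: cited source not found"
    if claim.quote.lower() not in sources[claim.source_id].lower():
        return "unsupported: quote not in source"
    return "supported"


grounded = Claim("within 30 days of purchase", "policy-001")
invented = Claim("bereavement refunds within 90 days", "policy-009")

print(retrieve("How many days do refund requests have?", TRUSTED_SOURCES))
print(check_attribution(grounded, TRUSTED_SOURCES))
print(check_attribution(invented, TRUSTED_SOURCES))
```

The check is deliberately strict: a citation to a document that is not in the store is rejected no matter how plausible it looks. That is the sense in which attribution requirements shift the burden of proof, although an exact-match test like this one would also reject accurate paraphrases.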

On the organisational side, risk tiering is the most practical starting point. Not all AI-generated content carries the same consequence of error. Email drafts sit at one end of the spectrum; legal documents, medical protocols, and public policy guidance sit at the other. Organisations that categorise AI use cases by consequence of error, and apply verification protocols proportionate to that consequence, manage hallucination risk without paralysing AI deployment entirely.
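One way to picture risk tiering is as a simple lookup from use case to required verification. The sketch below is illustrative only: the three tiers, the middle-tier example, and the wording of each protocol are assumptions, and only the placement of email drafts at the low end and legal, medical, and policy documents at the high end comes from the text.

```python
from enum import IntEnum


class RiskTier(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical assignments; each organisation would define its own.
USE_CASE_TIERS = {
    "email draft": RiskTier.LOW,
    "internal meeting summary": RiskTier.MEDIUM,  # assumed middle tier
    "legal document": RiskTier.HIGH,
    "medical protocol": RiskTier.HIGH,
    "public policy guidance": RiskTier.HIGH,
}

# Hypothetical verification protocols, proportionate to consequence of error.
VERIFICATION = {
    RiskTier.LOW: "author reads before sending",
    RiskTier.MEDIUM: "second reader checks facts and figures",
    RiskTier.HIGH: "domain expert verifies every claim against primary sources",
}


def required_verification(use_case):
    """Return (tier, protocol). Unlisted use cases default to HIGH, so
    nothing skips review merely because nobody classified it."""
    tier = USE_CASE_TIERS.get(use_case, RiskTier.HIGH)
    return tier, VERIFICATION[tier]


for case in ["email draft", "legal document", "chatbot reply to residents"]:
    tier, protocol = required_verification(case)
    print(f"{case}: {tier.name} -> {protocol}")
```

Defaulting unknown use cases to the highest tier is a design choice worth stating explicitly: it makes the safe path the default and forces a deliberate decision before any new use of AI is treated as low-risk.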

Training programmes that teach staff to identify hallucination patterns (implausible specificity, citations without context, confident statements about rapidly changing information) build the human capacity that remains the last line of defence against confabulation entering consequential decisions.

The cultural shift required may be the most important and the most difficult. Generative AI must be treated like an enthusiastic intern with perfect recall but no judgment. Everything it produces requires supervision. Its confidence is performance, not epistemic warrant. For institutions accustomed to treating authoritative-sounding outputs as reliable, internalising this distinction requires deliberate and sustained effort.

The Irony at the Centre

The very capability that makes generative AI revolutionary (producing novel, plausible content at scale) is inseparable from its greatest liability. You cannot have language models that generate creative solutions, draft communications, synthesise research, and accelerate knowledge work without also accepting that they will generate creative fabrications. The pattern-completion mechanism doesn't distinguish. It cannot distinguish.

This isn't a bug to fix in the next model update. It is a fundamental characteristic to manage through technical constraints, organisational protocols, and a cultural understanding that treats AI outputs as drafts requiring human validation, not conclusions requiring human approval.

For cities, businesses, legal institutions, and educational systems integrating generative AI into the fabric of their operations, this management is not a technical afterthought. It is the precondition for responsible deployment. The organisations that understand hallucination clearly (not as a flaw that undermines AI's value, but as a structural feature that defines how AI must be used) are the ones that will deploy it effectively and absorb its benefits without being ambushed by its confabulations.

The AI that invented six legal cases wasn't broken. It was working exactly as designed. The failure was in the assumption that it was doing something else.

In the next article, we examine how AI systems inherit the prejudices embedded in their training data, and what it means when historical inequality becomes algorithmic decision-making at scale.

Questions for Reflection

In which contexts within your organisation or city would "plausible but possibly false" AI-generated information be acceptable, and where would it be catastrophic?

How would your organisation's relationship with AI change if you assumed ten to fifteen percent of outputs contain fabricated facts?

What verification systems currently exist between AI generation and consequential decisions in your institution?

Who bears legal liability if AI-generated content published or acted upon by your organisation causes harm to residents or clients?

Can your teams distinguish hallucinated content from accurate information without external verification, and if not, what does that mean for your current AI deployment?