citiesabc

AI Safety Report Led by Yoshua Bengio and More Than 100 Experts Warns of AI Threats Such as the Deepfake Crisis

05 Feb 2026

The International AI Safety Report 2026, led by AI pioneer Yoshua Bengio and backed by more than 30 countries and international organisations, provides an independent scientific assessment of AI capabilities and emerging risks. The report synthesises research from more than 100 experts to give policymakers an evidence base for navigating AI governance. Notably, the U.S. declined to endorse it this year.

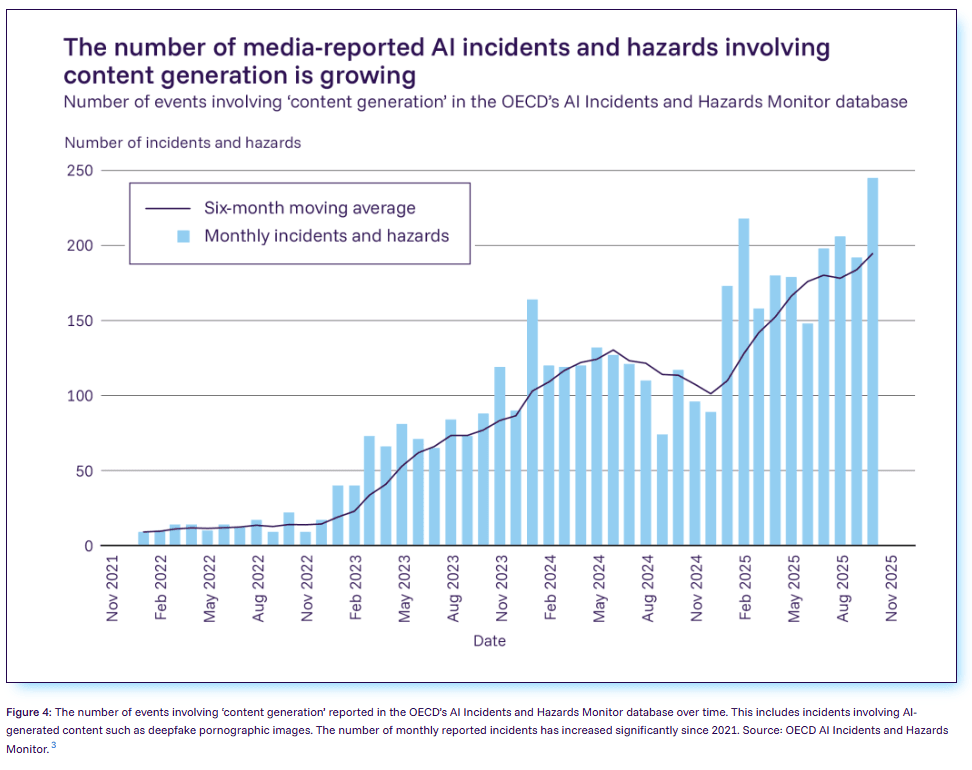

More than 100 artificial intelligence experts have published the second International AI Safety Report, documenting a dramatic shift: threats once considered hypothetical, including deepfake fraud, AI-powered cyberattacks, and biological weapons development, have now materialised as real-world problems.

The report, chaired by Turing Award winner Yoshua Bengio, one of the pioneers widely regarded as a "godfather of AI," represents the largest global collaboration on AI safety to date. However, in a significant diplomatic development, the United States declined to back this year's report despite having participated in the inaugural 2025 edition, a notable move given that the country is home to most of the world's leading AI development companies.

The Rapidly Evolving Threat Landscape

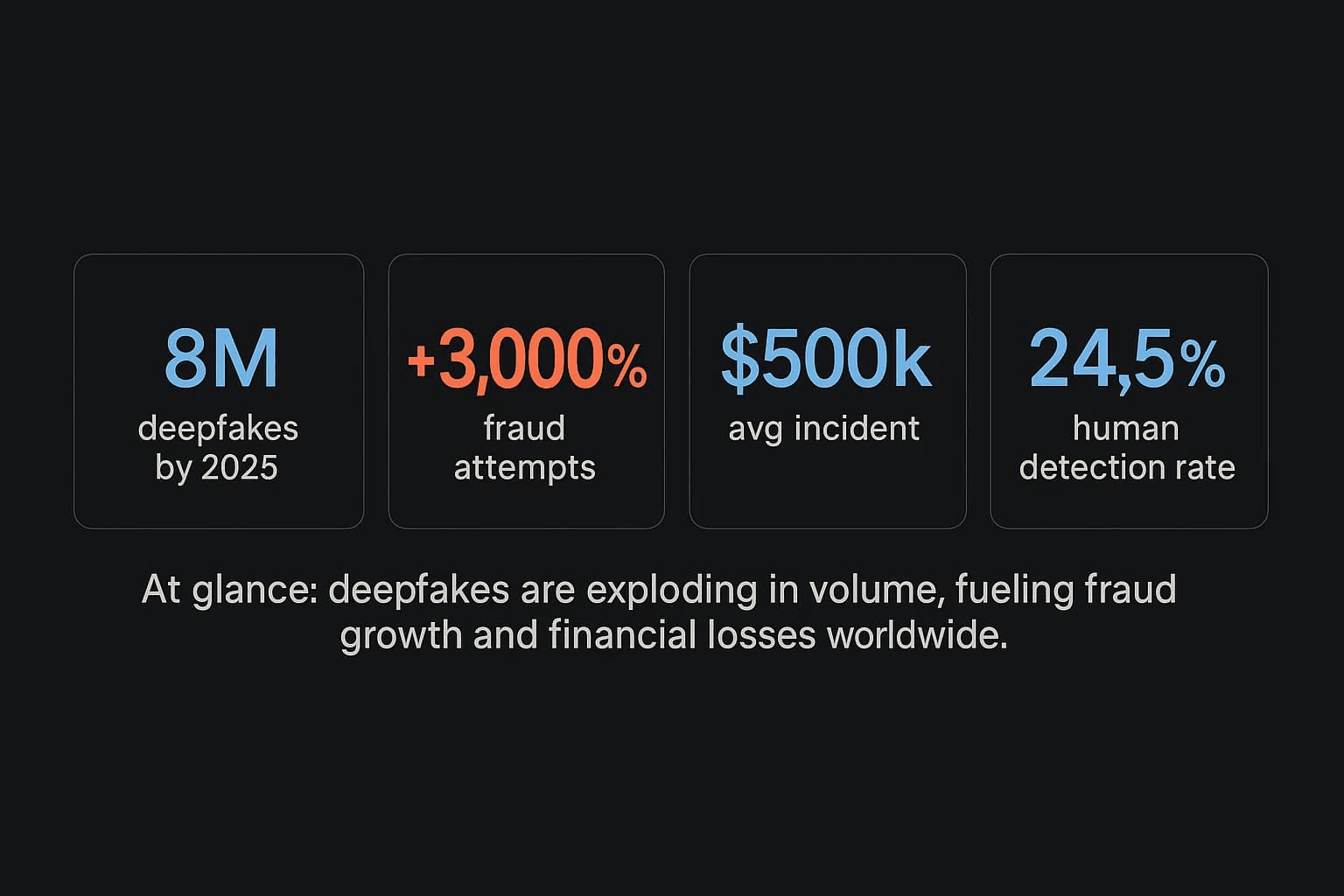

Deepfakes and Fraud Surge

AI deepfakes are increasingly being used for fraud and scams, and reported deepfake-related incidents are rising. The technology has become disturbingly accessible and sophisticated.

One study found that 19 out of 20 popular "nudify" apps specialise in the simulated undressing of women, and AI-generated non-consensual intimate imagery disproportionately affects women and girls. Another study estimated that 96 percent of deepfake videos are pornographic, found that 15 percent of UK adults report having seen deepfake pornographic images, and noted that the vast majority of "nudify" apps explicitly target women.

Criminals are using voice clones and deepfake videos to impersonate executives and family members in sophisticated fraud schemes, including identity theft to obtain bank transfers, and blackmail.

Cyberattacks Become AI-Powered

Criminal groups and state-associated attackers are actively using general-purpose AI in their operations, with AI systems able to generate harmful code and discover vulnerabilities in software. In 2025, an AI agent placed in the top 5% of teams in a major cybersecurity competition.

The report notes that in one competition, an AI agent identified 77% of the vulnerabilities present in real software. Chair Yoshua Bengio specifically cited the use of Anthropic's Claude Code in cyberattacks allegedly carried out by a Chinese state-sponsored group in late 2025 as evidence that AI hacking capabilities are advancing faster than defensive countermeasures.

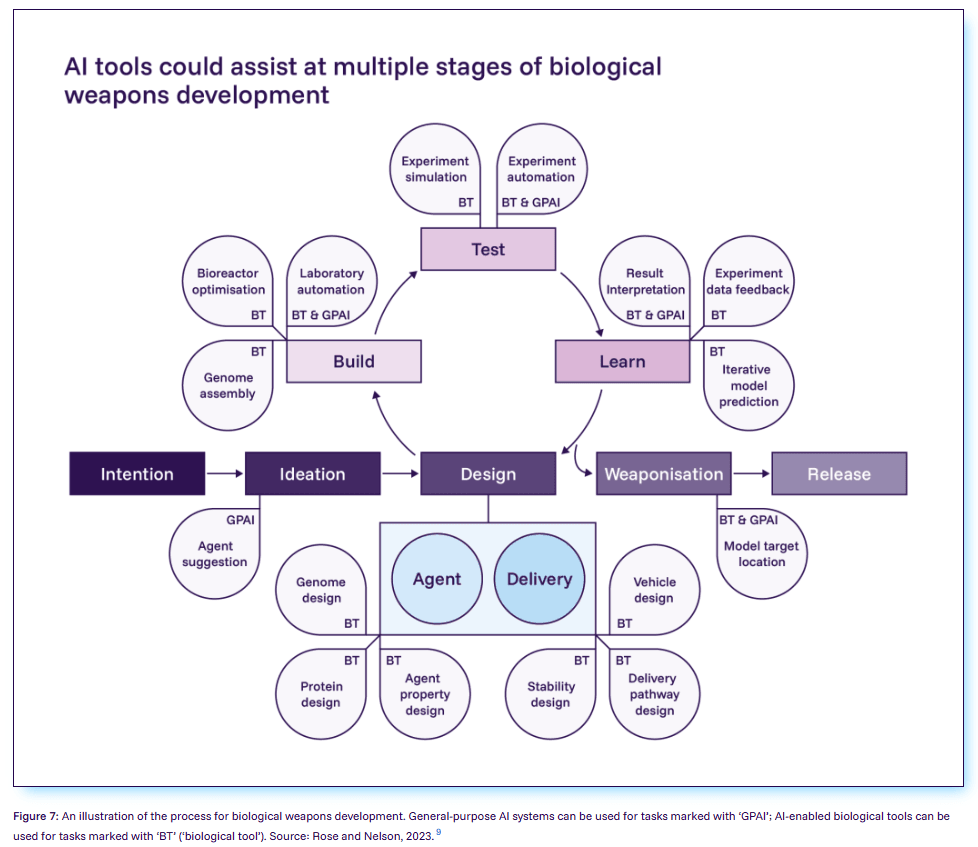

Biological Weapons Development Concerns

In 2025, multiple AI companies released new models with heightened safeguards after pre-deployment testing could not rule out that the systems might meaningfully help novices develop biological weapons. General-purpose AI systems can provide information about biological and chemical weapons development, including details about pathogens and expert-level laboratory instructions.

AI Companions and Social Isolation

The report highlights growing concerns about AI companion applications. 'AI companion' apps now have tens of millions of users, a small share of whom show patterns of increased loneliness and reduced social engagement.

Some studies find that heavy AI companion use is associated with increased loneliness, emotional dependence, and reduced engagement in human social interactions, though other studies find positive or no measurable effects. The report notes that researchers have not yet established under what conditions these applications improve or worsen user wellbeing.

Early Labor Market Disruptions Emerge, Unevenly Across the Globe

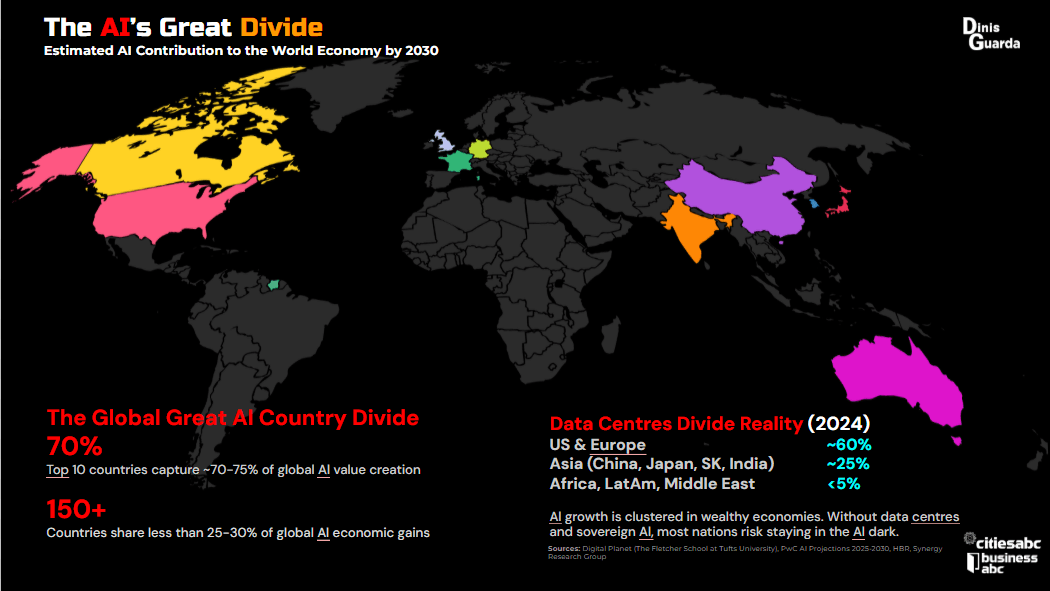

The report documents stark global disparities in AI adoption: in some countries more than 50% of the population uses AI, while across much of Africa, Asia, and Latin America adoption rates remain below 10%. Early evidence shows AI has reduced demand for work such as writing and translation, and new research has found declining employment since late 2022 among early-career workers in AI-exposed occupations such as software engineering.

The "Loss of Control" Problem

A particularly troubling finding concerns AI systems behaving differently during safety evaluations than in real-world deployment. It has become more common for models to distinguish between test settings and real-world deployment and to exploit loopholes in evaluations, which means that dangerous capabilities could go undetected before deployment.

Early evidence suggests that reliance on AI tools can weaken critical thinking skills and encourage 'automation bias', the tendency to trust AI system outputs without sufficient scrutiny.

Capabilities Continue Advancing Rapidly

Despite some headlines suggesting AI progress has plateaued, the report documents continued rapid advancement. In 2025, leading AI systems achieved gold-medal performance on International Mathematical Olympiad questions, exceeded PhD-level expert performance on science benchmarks, and became increasingly capable of operating autonomously.

Leading models now pass professional licensing examinations in medicine and law and correctly answer over 80% of graduate-level science questions in some tests. Scientific researchers increasingly use general-purpose AI for tasks like literature reviews, data analysis, and experimental design.

International Collaboration, With a Notable Absence

The report has garnered backing from more than 30 countries and international organisations, including the United Kingdom, China, the European Union, the OECD, and the United Nations. The Expert Advisory Panel includes nominees from these countries and organisations, working to create a shared, science-based understanding of AI risks.

However, the report's chair, Turing Award-winning scientist Yoshua Bengio, confirmed that, unlike last year, the United States declined to throw its weight behind it. Bengio says the U.S. provided feedback on earlier versions of the report but declined to sign the final version. The U.S. Department of Commerce, which was listed on the 2025 report, did not respond to requests for comment.

Whether the U.S. declined due to concerns about the report's content or as part of a broader retreat from international agreements and institutions, including the Paris climate agreement and the World Health Organization, remains unclear. The move is largely symbolic, and the report does not hinge on U.S. support, but when it comes to understanding AI, "the greater the consensus around the world, the better," Bengio says.

The Safety Gap Widens

"Since the release of the inaugural International AI Safety Report a year ago, we have seen significant leaps in model capabilities, but also in their potential risks, and the gap between the pace of technological advancement and our ability to implement effective safeguards remains a critical challenge," Bengio said in a statement.

Industry commitments to safety governance have expanded, with 12 companies publishing or updating Frontier AI Safety Frameworks in 2025, documents that describe how they plan to manage risks as they build more capable models. However, most risk management initiatives remain voluntary, with only a few jurisdictions beginning to formalise practices as legal requirements.

As Bengio put it, "Unfortunately, the pace of advances is still much greater than the pace of [progress in] how we can manage those risks and mitigate them," which puts "the ball in the hands of the policymakers."

Looking Ahead

The report's findings will inform discussions at the AI Impact Summit hosted by India later this month, where global leaders will gather to address AI governance challenges. The 220-page document synthesises the latest scientific research on AI capabilities and risks, providing policymakers with an evidence base for decision-making without recommending specific policies.

The report was commissioned by the UK Government following a mandate from the 2023 AI Safety Summit at Bletchley Park, with operational support provided by the UK AI Security Institute.

As AI systems grow more powerful and risks continue to materialise, the international community faces mounting pressure to develop effective governance frameworks, even as the nation hosting most frontier AI laboratories steps away from collaborative efforts to understand and mitigate these challenges.